by Shawn Bower

OpsWorks is an AWS service that provides configuration management for EC2 instances. The service relies on Chef and allows users to create and upload their own cookbooks. For the purposes of this post we will assume familiarity with Chef; those who are new to Chef should check out its documentation. The first thing we need to do is set up our cookbooks. First, let’s get the cookbook for NFS.

Cool, now we have the cookbook for NFS. When using OpsWorks, every cookbook has to sit in the root of the repository. We will need to move into the nfs directory, resolve all its dependencies, and move them to the root.

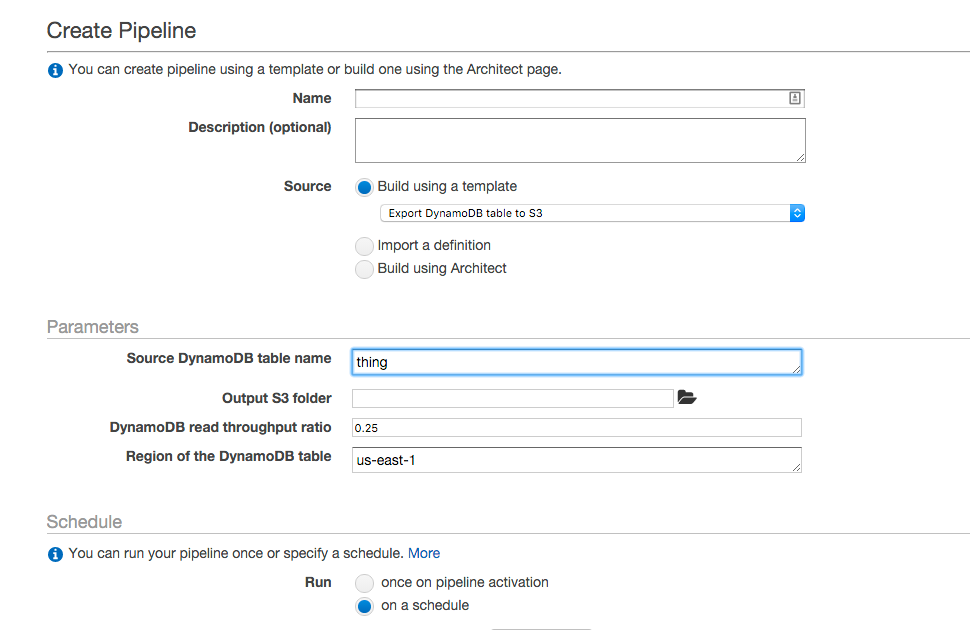

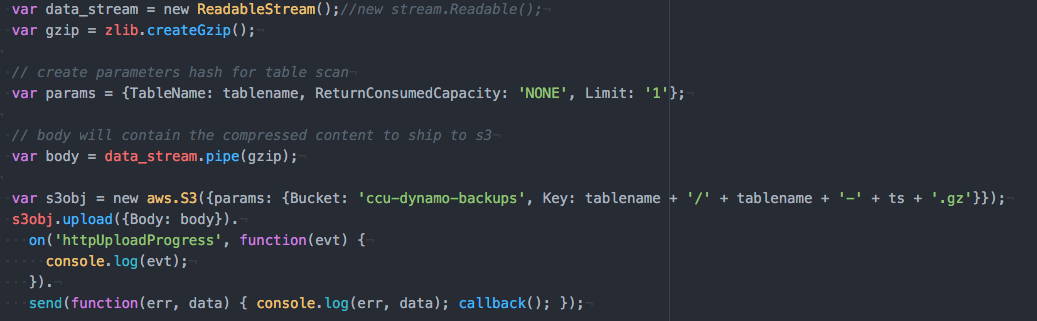
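The "move them to the root" step can also be scripted. Here is a minimal Python sketch, assuming the dependencies were first resolved into a scratch directory (for example with Berkshelf's `berks vendor`, which is an assumption about tooling and not necessarily what was used above). The function name `promote_cookbooks` and the directory names are hypothetical.

```python
import shutil
from pathlib import Path


def promote_cookbooks(vendor_dir, repo_root):
    """Move every cookbook directory found in vendor_dir up to repo_root.

    A directory counts as a cookbook if it contains metadata.rb or
    metadata.json. Cookbooks that already exist at the root are left alone.
    Returns the sorted names of the cookbooks that were moved.
    """
    vendor_dir, repo_root = Path(vendor_dir), Path(repo_root)
    moved = []
    # sorted() snapshots the listing, so moving entries while looping is safe.
    for candidate in sorted(vendor_dir.iterdir()):
        is_cookbook = candidate.is_dir() and (
            (candidate / "metadata.rb").exists()
            or (candidate / "metadata.json").exists()
        )
        target = repo_root / candidate.name
        if is_cookbook and not target.exists():
            shutil.move(str(candidate), str(target))
            moved.append(candidate.name)
    return moved
```

Calling `promote_cookbooks("vendor", ".")` from the repository root would leave each dependency as a top-level directory next to `nfs`, which is the layout OpsWorks expects.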

Now that we have the nfs cookbook with all its dependencies, we can configure our server. We have two options for getting the cookbooks to OpsWorks: we can either upload a zip of the root folder to S3 or push the contents to a Git repository. For this demonstration, let’s push what we have to a GitHub repository.

Now we can log in to the AWS console and navigate to OpsWorks. The first step is to create a new stack. A stack represents a logical grouping of instances; it could be an application or a set of applications. When creating our stack we won’t want to call it NFS, as it’s likely that our NFS server is only one piece of the stack. We will want to use the latest version of Chef, which is Chef 12; the Chef 11 stack is being phased out. In order to use our custom cookbooks, we will select the “Yes” button for custom cookbooks and add our GitHub repository.
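For readers who prefer scripting over the console, the same stack settings can be expressed as a request to the OpsWorks `CreateStack` API. This is a sketch rather than the method used in this post: `client` is assumed to be a boto3 OpsWorks client (`boto3.client("opsworks")`), the helper names are hypothetical, and the role ARNs and repository URL are values you would supply yourself.

```python
def build_stack_request(name, region, service_role_arn,
                        instance_profile_arn, cookbooks_git_url):
    """Return keyword arguments for the OpsWorks CreateStack call."""
    return {
        "Name": name,
        "Region": region,
        "ServiceRoleArn": service_role_arn,
        "DefaultInstanceProfileArn": instance_profile_arn,
        # Chef 12 stack, matching the choice made in the console.
        "ConfigurationManager": {"Name": "Chef", "Version": "12"},
        # Equivalent of answering "Yes" to custom cookbooks.
        "UseCustomCookbooks": True,
        "CustomCookbooksSource": {"Type": "git", "Url": cookbooks_git_url},
    }


def create_stack(client, name, region, service_role_arn,
                 instance_profile_arn, cookbooks_git_url):
    """Create the stack and return its ID.

    client is assumed to be a boto3 OpsWorks client.
    """
    request = build_stack_request(name, region, service_role_arn,
                                  instance_profile_arn, cookbooks_git_url)
    return client.create_stack(**request)["StackId"]
```

Keeping the request builder separate from the call makes it easy to inspect the settings before anything is created.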

One of the first things we will want to do is allow our IAM user to access these machines. We can import users from IAM and control their access to the stack as well as their access to the instances we will create. Each user can set a public SSH key to use for access to instances in this stack.
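The per-stack access described here corresponds to the OpsWorks `SetPermission` API. A minimal sketch, assuming the IAM user has already been imported into OpsWorks and that `client` is a boto3 OpsWorks client; the helper names are hypothetical.

```python
def build_ssh_permission(stack_id, iam_user_arn, allow_sudo=False):
    """Return keyword arguments for SetPermission that grant SSH access."""
    return {
        "StackId": stack_id,
        "IamUserArn": iam_user_arn,
        "AllowSsh": True,
        # sudo stays off unless explicitly requested
        "AllowSudo": allow_sudo,
    }


def grant_ssh(client, stack_id, iam_user_arn, allow_sudo=False):
    """Apply the permission; client is assumed to be a boto3 OpsWorks client."""
    client.set_permission(**build_ssh_permission(stack_id, iam_user_arn, allow_sudo))
```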

The next step is to create a layer, which represents a specific instance class. In this case we will want the layer to represent our NFS server. Click “Add a layer” and set the name and short name to “nfs”. From the layer we can control network settings such as EIPs or ELBs, choose EBS volumes to create and mount, and add security groups.

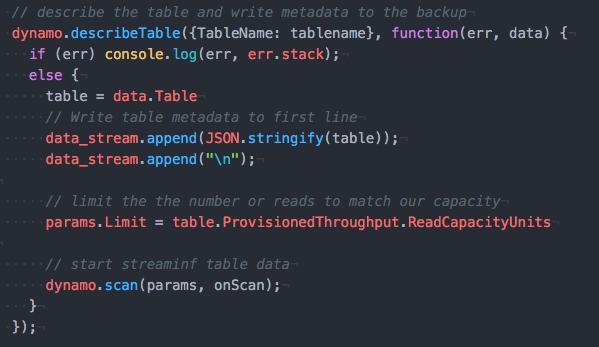

Once we have the layer we can add our recipes. When adding a recipe we have to choose the lifecycle event to attach it to. There are five phases in the lifecycle (Setup, Configure, Deploy, Undeploy, and Shutdown), and in our case it makes sense to add the nfs recipe to the setup phase. This will run once the instance has started and finished booting.
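The layer and its recipe assignment map onto the OpsWorks `CreateLayer` API, where each lifecycle event carries its own list of recipes. The following is a sketch under assumptions: `client` is a boto3 OpsWorks client, the helper names are hypothetical, and the recipe names passed in are whatever you would otherwise type into the console.

```python
LIFECYCLE_EVENTS = ("Setup", "Configure", "Deploy", "Undeploy", "Shutdown")


def build_layer_request(stack_id, name, shortname, setup_recipes):
    """Return keyword arguments for CreateLayer with recipes on Setup only."""
    recipes = {event: [] for event in LIFECYCLE_EVENTS}
    recipes["Setup"] = list(setup_recipes)
    return {
        "StackId": stack_id,
        "Type": "custom",  # a custom layer, as opposed to a built-in layer type
        "Name": name,
        "Shortname": shortname,
        "CustomRecipes": recipes,
    }


def create_layer(client, stack_id, name, shortname, setup_recipes):
    """Create the layer and return its ID.

    client is assumed to be a boto3 OpsWorks client.
    """
    request = build_layer_request(stack_id, name, shortname, setup_recipes)
    return client.create_layer(**request)["LayerId"]
```

For the layer in this post, both `name` and `shortname` would be `"nfs"`, with the nfs recipe as the only entry in `setup_recipes`.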

Now that we have our stack set up and have added a layer, we can add instances to that layer. Let’s add an instance using the default settings and an instance type of t2.medium. Once the instance is created we can start it up. Once the server is online we can log in and verify that the NFS service is running.
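Adding and starting the instance are two separate API operations, `CreateInstance` and `StartInstance`, which mirrors the two console steps. A minimal sketch, assuming `client` is a boto3 OpsWorks client and that the stack and layer IDs come from the earlier steps; the helper names are hypothetical.

```python
def build_instance_request(stack_id, layer_id, instance_type="t2.medium"):
    """Return keyword arguments for CreateInstance, other settings left at defaults."""
    return {
        "StackId": stack_id,
        "LayerIds": [layer_id],
        "InstanceType": instance_type,
    }


def launch_instance(client, stack_id, layer_id, instance_type="t2.medium"):
    """Create the instance, start it, and return its ID.

    client is assumed to be a boto3 OpsWorks client.
    """
    request = build_instance_request(stack_id, layer_id, instance_type)
    instance_id = client.create_instance(**request)["InstanceId"]
    # A newly created instance is not running until it is started explicitly.
    client.start_instance(InstanceId=instance_id)
    return instance_id
```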

From above we can see the logs of the setup phase showing that nfs is part of the run list. We can log in to the machine because we set up our user earlier and gave it SSH access. The bare minimum needed to run an NFS server is now installed; to take this further, we can configure which directories to export. In a future post we will explore expanding this layer.